How Enterprises Use LLMs Effectively

Table of Contents

Do you find yourself overwhelmed by endless documents, customer inquiries, and data analysis tasks? Your team probably spends countless hours manually processing information that intelligent AI could handle efficiently.

Large enterprises today manage vast amounts of data, documents, customer interactions, and internal communications. You likely recognize this struggle within your own workplace environment. Handling this information efficiently often requires significant manual effort and complex systems that drain productivity.

Large Language Models (LLMs) for Enterprise represent powerful AI capabilities that allow organizations to process natural language effectively. These systems generate content, automate communication, and extract valuable insights from unstructured data sources. LLMs for Enterprise help organizations improve productivity, streamline operations, and unlock tremendous value from existing data.

This comprehensive guide will show you exactly how this transformative technology can revolutionize your business operations. You will discover practical implementation strategies that deliver measurable results and competitive advantages.

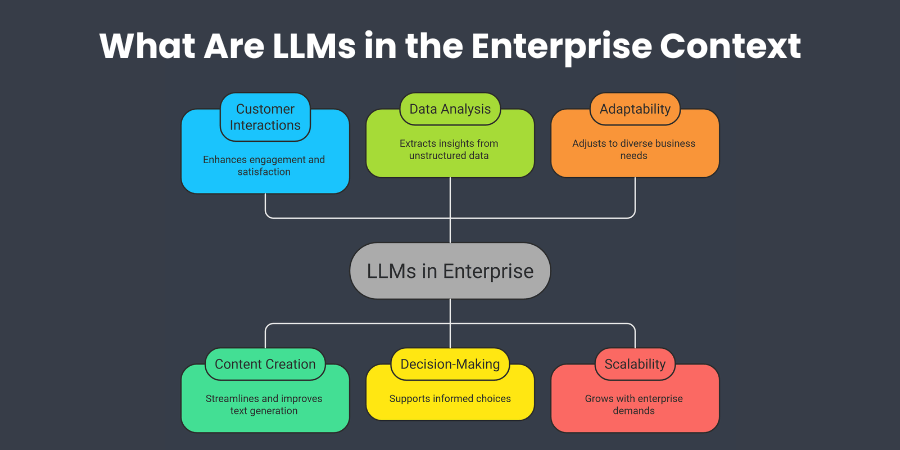

What Are LLMs in the Enterprise?

Large language models are advanced AI systems trained on massive datasets that understand and generate human-like text. These sophisticated systems process complex language patterns and deliver contextually appropriate responses for business applications.

In enterprise environments, LLMs process large volumes of internal and external information efficiently and accurately. This capability enables automation across communication, documentation, and decision support systems that impact daily operations.

Common enterprise applications that deliver immediate value include:

- Automated customer support responses that operate continuously

- Document summarization and analysis that saves manual review time

- Knowledge management systems that retrieve relevant information instantly

- AI-powered internal assistants that boost team productivity

Enterprises deploy LLMs through APIs, private cloud infrastructure, or custom-trained models depending on requirements. Your deployment choice depends on security needs, performance expectations, and organizational infrastructure capabilities.

Core Technologies Behind Enterprise LLMs

The transformer architecture lets models track context across long text sequences, which is what makes coherent analysis possible. It is the reason these AI systems can hold a conversation across multiple turns and analyze lengthy documents.

Natural language processing interprets and generates language that feels natural to customers and employees. Machine learning training frameworks train large models on massive datasets, providing broad knowledge capabilities.

Retrieval-augmented generation systems connect LLMs with enterprise knowledge bases for accurate, contextual responses. These technologies work together to create practical functionality that addresses real business challenges.
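The retrieval step can be sketched in a few lines. This is a minimal illustration only: it assumes a simple word-overlap score in place of the embedding search a production system would more likely use, and the function names and the KNOWLEDGE_BASE snippets are hypothetical.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, passage: str) -> int:
    """Count how many query tokens also occur in the passage."""
    q, p = Counter(tokenize(query)), Counter(tokenize(passage))
    return sum(min(q[t], p[t]) for t in q)


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return up to k passages with the highest score, dropping zero scores."""
    ranked = sorted(passages, key=lambda p: score(query, p), reverse=True)
    return [p for p in ranked[:k] if score(query, p) > 0]


def build_prompt(query: str, passages: list[str]) -> str:
    """Assemble a prompt that asks the model to answer from retrieved context."""
    context = "\n".join(f"- {p}" for p in retrieve(query, passages))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical knowledge-base snippets, for illustration only.
KNOWLEDGE_BASE = [
    "Refunds are processed within 10 business days of approval.",
    "The VPN client must be updated every quarter.",
    "Expense reports are due on the fifth day of each month.",
]

print(build_prompt("How long do refunds take?", KNOWLEDGE_BASE))
```

The resulting prompt would then be sent to whichever model the organization has deployed. Keeping retrieval separate from generation means the knowledge base can be updated without retraining the model.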

Why Enterprises Are Adopting LLMs

Organizations invest in LLM technology to achieve measurable business outcomes that directly impact profitability. The primary drivers behind adoption focus on operational efficiency and competitive advantage creation.

You can automate knowledge-intensive workflows that currently consume significant employee time and resources. This automation allows your team to focus on higher-value strategic work that drives growth.

Improved internal productivity through AI assistance enables faster decision-making and better resource allocation. Enhanced customer engagement becomes possible when you deploy conversational AI that provides instant responses.

Extracting insights from unstructured data unlocks value from information assets you already possess. Many enterprises view LLMs as essential tools for modernizing legacy systems and enabling AI-driven decision support.

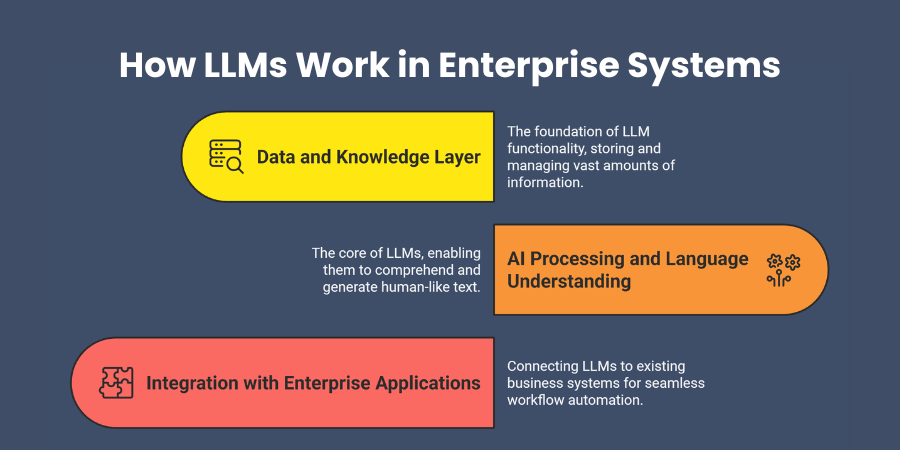

How LLMs Work in Enterprise Systems

LLMs operate within existing technology environments through integrated components that enhance rather than replace systems. Understanding these components helps you plan effective implementations that deliver sustainable value.

Data and Knowledge Layer

LLMs rely on enterprise data sources including internal documents, knowledge bases, customer interactions, and operational data. These datasets provide context that enables LLMs to generate relevant and accurate responses.

Connecting organizational knowledge to LLM systems creates powerful foundations for AI-driven insights and automation. Your system understands unique business context and terminology that improves response quality significantly.

AI Processing and Language Understanding

The model processes natural language inputs, identifies intent, and generates responses based on patterns learned during training. Contextual data supplied at inference time, such as retrieved documents or customer records, helps keep responses aligned with your specific business requirements.

Your system can become more effective as it handles more interactions, but this does not happen automatically: a deployed model does not learn organizational terminology on its own. Improvement typically comes from collecting feedback, updating the connected knowledge base, refining prompts, or fine-tuning the model.

Integration with Enterprise Applications

LLMs integrate with various enterprise platforms you currently use without disrupting existing workflows:

- Customer relationship management systems that enhance sales processes

- Enterprise resource planning platforms that streamline operations

- Document management systems that organize knowledge assets

- Collaboration tools and internal knowledge portals that boost productivity

These integrations enable workflow automation and employee support across all departments while maintaining operational continuity.

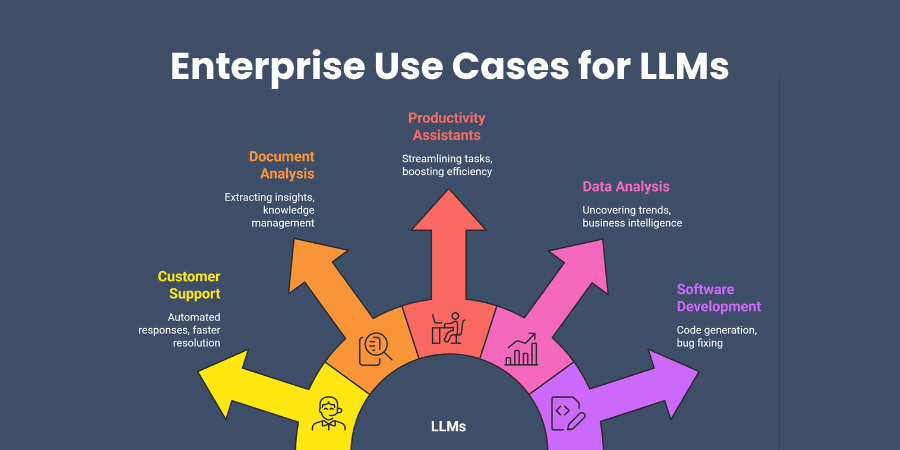

Enterprise Use Cases for LLMs

Real-world applications demonstrate how LLMs for Enterprise create measurable value across different business functions. These use cases provide proven frameworks for successful implementation strategies.

Customer Support Automation

LLMs power intelligent chatbots and virtual assistants that handle customer inquiries and provide product information. These systems resolve support issues instantly while maintaining consistent brand voice and service quality.

Key capabilities include automated responses, intelligent ticket classification, and conversational assistance that feels natural. You can dramatically reduce response times while improving customer satisfaction and reducing support costs.
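Ticket classification with a human fallback might look like the sketch below. The queue names, the keywords, and the keyword_classifier stand-in are all hypothetical; a real deployment would replace the stand-in with a call to its chosen LLM.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical queues and keywords, for illustration only.
QUEUE_KEYWORDS = {
    "billing": ("invoice", "refund", "charge"),
    "technical": ("error", "crash", "login"),
}


@dataclass
class Routed:
    queue: str
    needs_human: bool


def keyword_classifier(text: str) -> str:
    """Stand-in classifier: return the first queue with a matching keyword."""
    lowered = text.lower()
    for queue, keywords in QUEUE_KEYWORDS.items():
        if any(word in lowered for word in keywords):
            return queue
    return "unknown"


def route(text: str, classify: Callable[[str], str] = keyword_classifier) -> Routed:
    """Route a ticket; anything the classifier cannot place goes to a person."""
    label = classify(text)
    if label not in QUEUE_KEYWORDS:
        return Routed(queue="general", needs_human=True)
    return Routed(queue=label, needs_human=False)


print(route("I was charged twice on my last invoice"))
print(route("Where is your office located?"))
```

Passing the classifier in as a parameter keeps the routing rule testable without a model call and makes the escalation path explicit.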

Document Analysis and Knowledge Management

LLMs summarize documents, extract key information, and organize knowledge across enterprise repositories automatically and accurately. This capability transforms how organizations leverage institutional knowledge and accelerates information access.

Employees quickly access relevant insights from large document collections without manual searching or review processes. Your organization can scale expertise across teams and preserve valuable institutional knowledge systematically.
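Summarizing a long document section by section can be sketched as follows. The first_sentence function is a deliberately crude stand-in for an LLM summarization call, and both function names are assumptions made for this example.

```python
from typing import Callable


def first_sentence(text: str) -> str:
    """Stand-in summarizer: keep only the first sentence of the text."""
    head, sep, _ = text.strip().partition(". ")
    return head + "." if sep else head


def summarize_document(
    sections: list[str],
    summarize: Callable[[str], str] = first_sentence,
) -> str:
    """Summarize each non-empty section, then join the partial summaries."""
    partials = [summarize(s) for s in sections if s.strip()]
    return " ".join(partials)


report = [
    "Revenue grew. Costs fell.",
    "   ",
    "Headcount was flat. Hiring resumes soon.",
]
print(summarize_document(report))
```

Working one section at a time keeps each request within the model's context limit, which matters for documents longer than a single prompt can hold.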

Internal Productivity Assistants

Enterprises deploy AI assistants that help employees draft emails, summarize meetings, generate reports, and retrieve information. These assistants understand business context and maintain appropriate communication styles for different audiences.

Team productivity increases significantly when routine communication and documentation tasks become automated and intelligent. Your employees focus on strategic work while AI handles repetitive knowledge tasks.

Data Analysis and Business Intelligence

LLMs interpret natural language queries and generate insights from structured and unstructured business data. This capability democratizes data access across organizations and accelerates decision-making processes significantly.

Anyone can access data insights without technical expertise or complex query languages. Your teams make faster, more informed decisions based on comprehensive data analysis.
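A natural language query flow might be sketched like this, using Python's built-in sqlite3 module. The fake_text_to_sql function stands in for the LLM call that would translate a question into SQL, and the sales table and its rows are made up for the example.

```python
import sqlite3


def is_read_only(sql: str) -> bool:
    """Accept only a single SELECT statement; reject everything else."""
    stripped = sql.strip().rstrip(";")
    return stripped.lower().startswith("select") and ";" not in stripped


def fake_text_to_sql(question: str) -> str:
    """Stand-in for an LLM call that would translate the question into SQL."""
    return "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"


def answer(question: str, conn: sqlite3.Connection) -> list[tuple]:
    """Translate the question, check the SQL, then run it."""
    sql = fake_text_to_sql(question)
    if not is_read_only(sql):
        raise ValueError("refusing to run a non-SELECT statement")
    return conn.execute(sql).fetchall()


# Hypothetical data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("EMEA", 120), ("APAC", 80), ("EMEA", 30)],
)
print(answer("What are total sales by region?", conn))
```

The string check is a basic guard rather than a complete safeguard. Running generated queries under database credentials that only permit reads is the stronger control.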

Software Development Support

LLM-powered coding assistants help engineering teams write code, debug applications, and accelerate development cycles. These tools maintain code quality while improving developer productivity and reducing time-to-market.

Developers receive intelligent suggestions, automated documentation, and debugging assistance that enhances overall software quality.

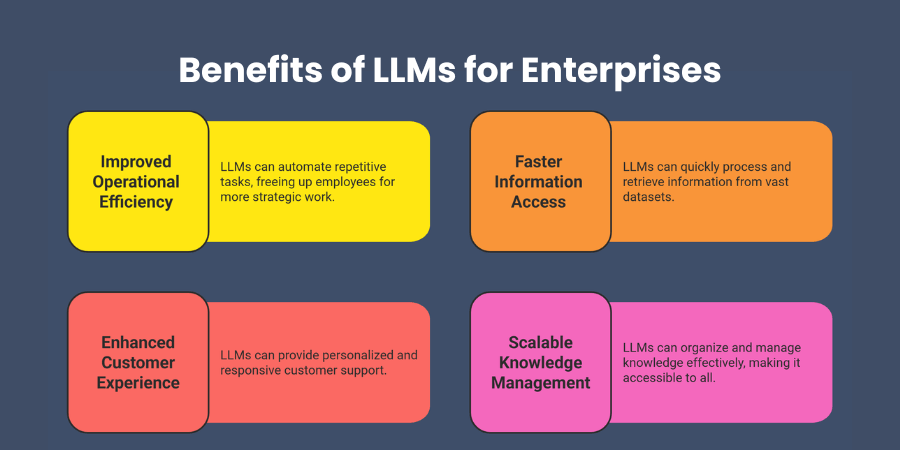

Benefits of LLMs for Enterprises

Measurable outcomes from LLM implementation demonstrate clear return on investment and operational improvements. These benefits justify technology investments and support business case development.

Improved Operational Efficiency

LLMs automate repetitive knowledge-based tasks, reducing manual workloads that consume valuable employee time. You will notice immediate productivity gains as teams focus on strategic initiatives.

Faster Information Access

Employees retrieve insights from large datasets using natural language queries instead of complex searches. This democratization of data access accelerates decision-making and improves organizational agility significantly.

Enhanced Customer Experience

Conversational AI systems improve responsiveness and personalization in customer interactions while reducing service costs. Your customers receive better service while support teams handle complex, high-value engagements.

Scalable Knowledge Management

LLMs enable organizations to organize and leverage large volumes of internal knowledge effectively and systematically. You can scale expertise across teams and maintain institutional knowledge more efficiently.

Challenges of Implementing LLMs in Enterprises

Successful implementation requires addressing key challenges through careful planning and risk mitigation strategies. Understanding these obstacles helps you prepare effective solutions and avoid common pitfalls.

Data Privacy and Security

Enterprises must protect sensitive information and comply with regulatory requirements when implementing LLM systems. This challenge requires robust security frameworks that protect confidential data while enabling AI capabilities.

You need comprehensive approaches to data handling, model deployment, and access controls. Security measures must address privacy concerns without limiting system functionality or user adoption.
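One common data-handling step is masking personal details before text leaves your environment. The sketch below assumes that email addresses and phone numbers are the fields to mask and uses simple regular expressions; these patterns are illustrative and will not catch every format.

```python
import re

# Illustrative patterns only; real deployments usually need broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


message = "Contact jane.doe@example.com or +1 (555) 010-9999 about order 42."
print(redact(message))
# prints: Contact [EMAIL] or [PHONE] about order 42.
```

Redaction of this kind complements access controls and encryption rather than replacing them.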

Model Accuracy and Hallucinations

LLMs may generate incorrect or misleading outputs, often called hallucinations, so your implementation strategy needs validation mechanisms. You should establish quality control processes that verify AI-generated content before it reaches customers.

Validation systems ensure accuracy while maintaining the speed and efficiency benefits that justify LLM adoption.
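A validation check can be as simple as confirming that figures in a generated answer actually appear in the source material. The sketch below covers numbers only, which is an assumption made to keep the example short; the function names are hypothetical.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(answer: str, source: str) -> list[str]:
    """Return numbers that appear in the answer but nowhere in the source."""
    known = set(NUMBER.findall(source))
    return [n for n in NUMBER.findall(answer) if n not in known]


def needs_review(answer: str, source: str) -> bool:
    """Flag the answer for human review if any number lacks support."""
    return bool(unsupported_numbers(answer, source))


source = "The contract runs for 24 months with a fee of 1500 per month."
print(unsupported_numbers("The fee is 1500 per month for 24 months.", source))
print(unsupported_numbers("The fee is 1800 per month for 24 months.", source))
```

A check like this catches only one class of error. Answers that pass should still be sampled for human review, especially in customer-facing workflows.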

Infrastructure and Cost

Running large models requires significant computing resources that impact technology budgets and infrastructure planning. You need to balance performance requirements with cost considerations for sustainable long-term implementation.

Cloud-based solutions can minimize initial infrastructure investment while you evaluate performance and cost implications.
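For usage-priced cloud APIs, a rough monthly estimate follows from request volume and token counts. The prices and volumes below are placeholders rather than any vendor's actual rates, and the function name is hypothetical.

```python
def monthly_cost(
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    price_in_per_1k: float,
    price_out_per_1k: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend from per-request token counts and unit prices."""
    per_request = (
        (input_tokens / 1000) * price_in_per_1k
        + (output_tokens / 1000) * price_out_per_1k
    )
    return round(requests_per_day * days * per_request, 2)


# Placeholder figures: 2,000 requests a day, 1,500 input and 300 output tokens
# per request, priced at 0.001 and 0.002 per 1,000 tokens respectively.
print(monthly_cost(2000, 1500, 300, 0.001, 0.002))
```

Substituting your own volumes and your provider's published rates gives a first-order figure to compare against the cost of private infrastructure.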

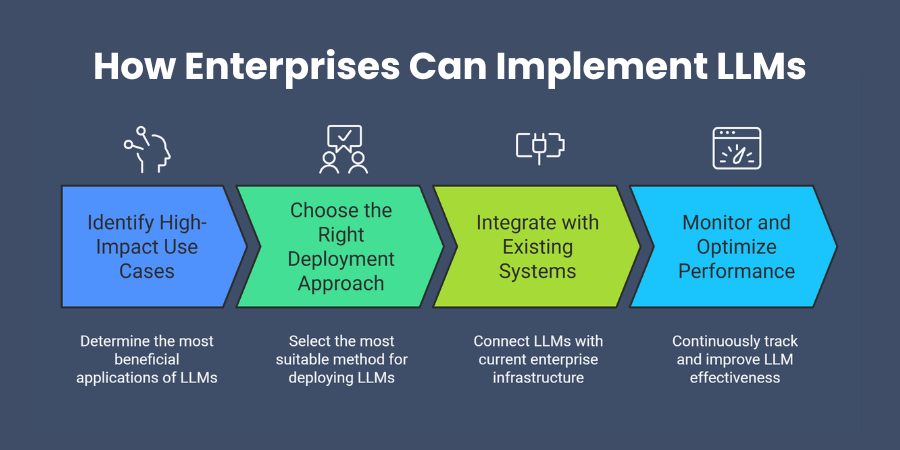

How Enterprises Can Implement LLMs

Successful implementation follows proven methodologies that minimize risk while maximizing value creation and user adoption. This practical roadmap guides you through essential implementation phases.

Identify High-Impact Use Cases

Focus on workflows involving large volumes of text, documents, or communication where LLMs deliver value. You should prioritize use cases with clear success metrics and strong business justification.

High-impact applications typically involve repetitive knowledge work that consumes significant employee time and resources.

Choose the Right Deployment Approach

Cloud APIs provide quick implementation with minimal infrastructure investment for rapid value demonstration. Private deployments offer maximum security and control for sensitive data and compliance requirements.

Fine-tuned models deliver specialized performance for unique organizational requirements and industry-specific terminology. Your choice depends on security needs, performance expectations, and available resources.

Integrate with Existing Systems

Connect LLM capabilities with enterprise applications such as CRM, ERP, and document management systems. This integration approach ensures LLMs enhance rather than disrupt current workflows and user experiences.

Seamless integration maintains user adoption while delivering AI capabilities through familiar interfaces and processes.

Monitor and Optimize Performance

Continuously evaluate outputs and improve models through feedback and data updates for sustained value. You should establish performance metrics that align with business objectives and user satisfaction goals.

Regular optimization ensures your LLM systems continue delivering value as business requirements evolve.
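Performance metrics can start from a simple log of interactions. The fields tracked here (helpfulness feedback, latency, and escalation to a human) are assumptions chosen for illustration, not a prescribed set.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    helpful: bool      # user feedback on the response
    latency_ms: float  # time taken to respond
    escalated: bool    # whether a person had to take over


def summarize_metrics(log: list[Interaction]) -> dict[str, float]:
    """Compute helpfulness rate, escalation rate, and average latency."""
    if not log:
        raise ValueError("log is empty")
    n = len(log)
    return {
        "helpful_rate": sum(i.helpful for i in log) / n,
        "escalation_rate": sum(i.escalated for i in log) / n,
        "avg_latency_ms": sum(i.latency_ms for i in log) / n,
    }


# Hypothetical log entries, for illustration only.
log = [
    Interaction(True, 100.0, False),
    Interaction(True, 200.0, False),
    Interaction(False, 300.0, True),
    Interaction(True, 400.0, False),
]
print(summarize_metrics(log))
```

Tracking these figures over time shows whether prompt changes or knowledge base updates are improving results.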

Future of LLMs in Enterprise

Emerging trends will shape enterprise AI strategies and create new opportunities for competitive advantage. Understanding these developments helps you plan long-term technology investments and organizational capabilities.

Enterprise AI Agents

LLM-powered agents will manage complex workflows across multiple systems with minimal human intervention. You will have AI assistants that complete entire business processes while maintaining quality standards.

Domain-Specific Language Models

Organizations will increasingly train models specialized for their industry or internal knowledge for improved accuracy. These specialized models understand business context more deeply and provide more relevant responses.

AI-Augmented Workforces

Employees will collaborate seamlessly with AI assistants to enhance productivity and decision-making capabilities. This partnership approach maximizes human creativity while leveraging AI efficiency and processing power.

How Shadhin Lab Enables Enterprise LLM Solutions

Shadhin Lab serves as your technology partner for implementing LLMs for Enterprise that deliver measurable results. Our expertise spans AI architecture, enterprise integrations, data pipelines, and scalable AI deployments.

You gain access to proven methodologies and best practices that accelerate implementation while minimizing risks. We understand unique challenges in enterprise AI adoption and provide customized solutions.

Our comprehensive approach addresses technical requirements, business objectives, and organizational change management for successful adoption.

Frequently Asked Questions

How much does implementing LLMs for Enterprise typically cost?

Implementation costs vary significantly with deployment approach, scale, and customization requirements. Cloud solutions can start in the thousands per month, while custom implementations require larger investments. Evaluate total cost of ownership, including infrastructure, training, and maintenance.

What security measures are necessary for enterprise LLM deployment?

Comprehensive security frameworks include data encryption, access controls, audit logging, and compliance monitoring systems. Your approach should address data privacy, model security, and integration security across touchpoints. Regular security assessments ensure ongoing protection.

How long does it take to see ROI from LLM implementation?

Most enterprises see initial productivity gains within three to six months of deployment. Full ROI typically materializes within twelve to eighteen months as adoption increases across the organization. Success depends on use case selection and implementation quality.

Can LLMs integrate with our existing enterprise software systems?

Modern LLMs integrate with most enterprise platforms through APIs and standard protocols. You can connect LLMs to CRM, ERP, document management, and collaboration systems, often without major infrastructure changes. Integration complexity varies by system architecture.

What skills does our team need to manage enterprise LLMs successfully?

Your team benefits from familiarity with AI concepts, prompt engineering, data management, and system integration. However, deep technical expertise is not required for implementation when you have proper vendor support. Training programs can develop the necessary capabilities quickly.

Conclusion

LLMs for Enterprise provide powerful tools to automate knowledge work, improve decision-making, and enhance customer experiences. Organizations that successfully adopt this technology position themselves for significant productivity gains and competitive advantages.

The key to success lies in identifying high-impact use cases and developing strategic implementation plans. Start with workflows that involve large volumes of text processing where LLMs deliver immediate value.

Your next step involves evaluating current business challenges and selecting appropriate deployment approaches for your requirements. The technology is mature enough for enterprise adoption, and the competitive advantages justify investment.

Begin your LLM transformation journey today by focusing on practical applications that address real business needs. You have the knowledge and framework needed to make informed decisions that drive success.

Shaif Azad
